The first time most of us became aware of the term “safety driver” was probably March 18, 2018. That was the day a self-driving Uber car plowed into and killed a pedestrian in Tempe, Arizona, while its safety driver was watching a video on her cell phone. The term safety driver had a hollow ring to it after that.

Inside the Cab of an Autonomous Truck

Testing autonomous trucks is more complex than it seems. The men and women sitting in the left seat of the nation's burgeoning fleet of robotic trucks are often called safety drivers. Their responsibilities are far more comprehensive than just keeping the truck out of trouble.

A TuSimple test driver (on the left) and software tech (right seat) pair to both keep the truck out of trouble and to teach the Autonomy how to drive the truck.

TuSimple video screen capture

While the shortcomings of that particular technology and Uber’s approach to development are well documented, it seems that the developers of self-driving heavy trucks take their responsibilities a little more seriously. However, there are few rules and regulations governing the testing of self-driving vehicles on public roads against which their performance can be judged.

The truck and its human occupants remain subject to all the usual regulatory requirements (FMCSRs, etc.) governing heavy trucks, but there are few rules relating to the standards and qualifications of either the person in the left seat (usually called the driver), or the functionality and capability of the sensors, computers and artificial intelligence that control the truck.

The Department of Transportation’s autonomous vehicle guidance document, "Preparing for the Future of Transportation: Automated Vehicles 3.0," and its previous versions speak only to the need to have a “trained commercial driver” behind the wheel at all times. The term “trained” isn't defined.

DOT's AV 3.0 has this to say about safety drivers:

Safety drivers serve as the main risk mitigation mechanism at this stage [testing and development]. Safety-driver vigilance and skills are critical to ensuring safety of road testing and identifying new scenarios of interest.

Usually, in addition to a safety driver, an employee engaged in the ADS (automated driving system) function/software development track is also present in the vehicle.

At least two companies, TuSimple and Waymo Via (Waymo's heavy truck platform), are writing their own playbooks on the roles and responsibilities of safety drivers as they test their automated driving systems on the open road. Both companies offer reassuring accounts of their safety-driver performance criteria and training programs.

Recently, Pima Community College's Center for Transportation Training in Tucson, Arizona, unveiled a training program for autonomous commercial vehicle safety drivers, called the Autonomous Commercial Truck Driver Certificate Program. It’s a work in progress, but the basic curriculum is in place and several students are close to becoming the first graduates of the program.

The safety driver's actual role is quite different from what you might expect. There’s more to it than meets the eye.

The Safety Driver's Role

Referring to the left-seat occupants as simply safety drivers would be a mistake. Their responsibilities are far more comprehensive than just keeping the truck out of trouble. In fact, after we completed our interview with Waymo CDL driver and training instructor, Jon Rainwater, a Waymo spokesperson asked if we would consider using a different descriptor.

“While the term ‘safety driver’ is widely used in the industry and media, from a Waymo perspective we prefer ‘trained driver’ or ‘test driver,’” the spokesperson wrote. “We think it's more all-encompassing of their role and leans into the fact that they are highly trained.”

After learning more about the left-seater’s role, we agreed. Preventing calamity is only a small part of the test driver's job.

Waymo Via safety driver trainer, Jon Rainwater, inspects one of the sensors on the truck prior to a trip.

Photo: Waymo Via

Rainwater, 61, was a military intelligence analyst during the Cold War and got into trucking when demand for his expertise dried up in the early 1990s. More than five years ago he was recruited by Transdev to join the Waymo development team as a test driver, and he became a test-driver instructor about six months ago. (Transdev recruits and manages trained drivers for Waymo’s testing and development operations for passenger cars and heavy trucks.)

A typical day on public roads with The Waymo Driver (a term Waymo uses to describe its AI-powered autonomous driving system) begins with detailed briefings on the day’s work plan and a review of notes from the previous day's post-trip briefing. An enormous amount of data is uploaded from the truck, essentially a complete data and visual log of the trip, which is reviewed by a team of engineers and compared to the test driver's observations.

There may be instances where the Waymo Driver does something the test driver would not have done, or would have done differently, such as changing lanes to avoid a vehicle stopped on the shoulder of the road. The software technician riding with the test driver flags such discrepancies as they happen, and engineers review them overnight. The next day, a group of engineers and drivers reviews and discusses each incident. If engineers make any changes to the programming, the driver is alerted before beginning the day's work.

Following the briefings, the test driver conducts the usual pre-trip inspection required of all CDL drivers, in addition to cleaning and inspecting all the cameras, lidar and other sensors. Meanwhile, the software technician conducts a thorough systems check. Preparations for the daily run can take several hours.

The test drivers are paired with software technicians who, in most cases, are not CDL holders. It’s that person’s responsibility to monitor the computer side of the operation.

“Typically, the driver focuses on the truck, what it’s doing, how it’s reacting, etc., while the software tech focuses on what the software is doing,” Rainwater says. “There's a great deal of communication between the tech and the driver as we're travelling. It’s not a silent show.”

Choreographed Conversation

The interaction between the two occupants of the cab is choreographed and very disciplined. They operate as a team, with the person in the left seat watching outside the truck through the windows and mirrors while the software tech watches a computer-generated rendering of the environment on a laptop computer screen.

“The left-seater is responsible for constant observation of the environment around the vehicle, assuring that the actual vehicle environment matches what the person in the right seat observes on screen,” says TuSimple Chief Product Officer Chuck Price.


The dialogue between the driver and the software tech is reminiscent of the activity on the flight deck of a commercial airliner, where the pilot-observing calls out altitudes, headings, air speed, etc. to the pilot-flying. It follows a script, usually in some lingo or verbal shorthand they both understand.

“The right-seater may say, for example, 'left corner pocket,' meaning there's a vehicle at the left-rear-corner of the truck. The left-seater will check the mirror and confirm there is a vehicle in that location,” says Price.

As Rainwater says, there’s a constant dialogue between the two humans onboard, one observing, the other confirming. If there’s ever a disagreement, or if either one isn’t happy with what they see, either can disengage the Autonomy (the onboard AI control system) simply by saying “disengage.” At that point the test driver assumes manual control of the truck.

“Some people might be under the assumption that the test driver is just sitting there waiting for the truck to make a mistake, but it’s quite the opposite,” Rainwater says. “As the driver, I don’t have to worry about keeping the truck centered in the lane or maintaining a constant speed. The Autonomy does that. I’m constantly looking outside, predicting what the truck’s next move will be and comparing that to what the tech tells me the truck is going to do. I’m constantly and highly engaged in the process. I’m ready to take over in a split-second if I have to.”

Driving, but Not Driving

Price, who happens to be a pilot, has brought some of aviation's discipline and decision-making protocols into Autonomy development at TuSimple. The driver in the left seat, referred to at TuSimple as the left-seater, is the one in command: the one who makes the final decision and is responsible for the outcome.

“We’re very careful in the protocol that the right-seater does not try to take over command of the vehicle or order the left-seater to do certain things,” Price says. “The right-seater [the software tech] makes only recommendations. The left-seater makes the decisions. We believe that enhances safety because there's no confusion.”


For compliance purposes, the left-seater logs in as “driving” on the electronic logging device when the truck is in motion, but Price says about 90% of that time is spent observing, rather than actually steering and gearing the truck.

“The human driver is making decisions on whether or not it’s appropriate to continue a particular behavior, or should we make a different choice?” he says.

The technology is fairly mature now, so routine maneuvers such as merging with traffic from an on-ramp, passing, lane changes, etc., require only oversight and observation, and very seldom any intervention. Throughout, though, the right-seater is head-down watching the computer screen and calling out what the Autonomy is seeing and how it’s reacting. In most cases the left-seater just verbally confirms what the right-seater sees.

There are cases where the left-seater might not agree with the Autonomy's assessment of the situation, and so is obliged to speak up. Those instances are flagged and reviewed by the team after the trip. They usually are not life-and-death decisions, but they could be.

“We could have a situation where, for example, we see a car coming up fast behind us, and like any driver, we want to watch that vehicle to see what it does,” Rainwater says. “The software tech watches the event unfold on screen, tracked by the truck, while I'm watching the mirrors. I’d have my hands soft-gripping the steering wheel and my foot hovering over the brake in case that car decided to cut in front of the truck trying to make it off an exit ramp – as often happens. My instinct might be to apply the brakes to give the car some room, but the objective is to see how the Autonomy will handle the situation.”

Then you have what are called “edge cases,” where the environment becomes less predictable, such as when driving amongst impaired or distracted drivers. The test drivers are always looking for bad behavior on the road around them – even the presence of emergency vehicles.

“If bad actors show up around our vehicle, we generally discontinue testing,” Price says. “It’s not appropriate on a busy public highway to be mixing it up with someone who is really not paying attention. It’s dangerous for reasons that are out of our control. We’re quite conservative in our on-road testing. It’s just part of our responsibility.”

Behind the Scenes

In addition to their on-road duties as noted above, test drivers spend a lot of time on the test track, out driving around alone and consulting with the engineers. The test track is where they run unique scenarios to develop the capability of the Autonomy. Waymo has a 91-acre testing facility called Castle in Atwater, California, two hours southeast of San Francisco. The site is basically a model city with all the typical urban infrastructure. Both cars and trucks are tested there in some strange situations that challenge the Autonomy’s reactions.

“We had a scenario once where somebody jumped out of a canvas bag,” says Rainwater. “Another time we had a skateboarder ride past the Waymo Driver lying down on the skateboard rather than standing up. The purpose is to give artificial intelligence something different to contend with.”

TuSimple safety driver trainer Randy Redwine.

Photo: TuSimple

Similarly, TuSimple's test drivers run through a lot of edge-case scenarios at various test tracks around the country.

TuSimple also does a lot of what it calls shadow testing, where the test driver just drives around with the Autonomy running, but not controlling the vehicle.

“The Autonomy is making all the decisions it would normally make if it was operating the vehicle, and it's all being recorded, but we're also recording what the driver actually did,” Price says. “That gives us a constant stream of what the driver did versus what the Autonomy would have done, providing a very rich data set of how aligned the virtual driver is with the human driver – or how divergent it is. That allows us to do very deep training of our autonomous decision-making.”

Test drivers also spend a lot of time working one-on-one with the engineering teams, explaining human reactions to various situations, which helps the engineers improve the artificial intelligence. For this, drivers are trained on how the various systems function and how AI works so they can explain their understanding of the real world to the engineers in terms they can understand. Hours are spent reviewing logged instances from previous trips where some incongruity appeared, with the drivers explaining their perspective to the engineers. There’s a learning curve there for the test drivers, getting them up to speed on some of the engineering and computer terminology and jargon the engineers use.

Hiring and Training Test Drivers

Bringing on new test drivers is a challenge all the autonomous companies presently face as testing operations ramp up and some get closer to deployment. TuSimple and Waymo both said their rejection rate is very high, on the order of 80-90%, even for drivers with 10 and 20 years of experience. It’s not only a proven safety record they are looking for, though that’s a large part of it – they also are looking for the ability to follow instructions, demonstrate consistent driving behavior, and be conversant in the language of the engineers.

Price says TuSimple looks for drivers with lots of experience and “pristine” safety records, and it also does extensive background checks looking at the types of companies applicants have worked for in the past.

“We look at the training you’ve been through and the [companies] you've come from to understand, did you get safety training, was that a reputable fleet?” he says. “We ask our drivers to operate the vehicle in conformance with our performance standards, so if you’re an aggressive lane changer and weave in and out of traffic, you’re not going to be a TuSimple driver.”

A TuSimple safety driver does a pre-trip inspection

Photo: TuSimple

The companies are trying to get their trucks to behave on-road the same way the best human drivers would. The human test drivers, in addition to preventing calamity in their role as safety drivers, are in fact teaching the Autonomy while they drive, by reacting or not reacting to what the truck does in certain situations. The artificial intelligence learns from experience, so the more context it gets, the better it will be, presumably.

Consider this scenario: When merging onto a highway, drivers in the main traffic flow legally are supposed to maintain a constant speed and let the merging driver weave themselves into the traffic flow. But if you’re strictly conforming just to the traffic laws, you’re not being polite, as Price puts it.

“There are a lot of social cues that both drivers have to be attentive to, such as jockeying for position. You have to be a part of that,” says Price. “We spend a tremendous amount of time developing the social behaviors of the Autonomy so that it blends well with the other traffic in those situations, and our drivers are heavily involved in that.

“There are some cases where the Autonomy has to follow very explicit rules, and there are other cases where the Autonomy is developing based on statistical observation,” Price explains. “It’s an artificial-intelligence-based system that also has to make decisions filtered through another less explicit set of rules such as driving behavior [patterns].”

All that to say, being a veteran driver with an excellent safety record isn’t enough to guarantee you a job with some autonomous truck developers. A significant part of the job is interacting with the computers and the engineers. That training would be company-specific and provided by the employer after all the basic hiring requirements have been met.

The development team at the Pima Community College Center for Transportation Training, who recently created the autonomous vehicle driver and operations specialist program.

Photo: Pima Community College

To help prospective test drivers with those requirements, Pima Community College's Center for Transportation Training in Tucson recently created an autonomous vehicle driver and operations specialist program. The college already had a CDL training program in place, and it also offers computer science and a few other related disciplines. However, they all sit within different academic silos. From the academic perspective, that meant pulling several deans and reporting lines into one multidisciplinary program (which doesn't happen a lot in academia). Still, they managed to pull a program together, with support and encouragement (and maybe a little coercion) from TuSimple.

Missy Blair, advanced program manager at Pima Community College's Center for Transportation Training, says the program was built from scratch based on what TuSimple wanted in its test drivers.

“We listened to what business and industry said they needed,” she says. “When we went into this, we thought we would go down a diesel pathway. But since the applicants already have driving experience, they don't really need to learn about trucks. TuSimple was looking for better verbal and written communication skills because they’ll be documenting what this truck is doing. And since they will be talking to engineers, the drivers need to understand the vocabulary and how some of the systems and sensors work. So, we went more in that direction.”

The course outline includes courses in industrial safety, electrical systems, introduction to autonomous vehicles, computer hardware components, and transportation and traffic management – far too much to describe here.

Graduates receive a certificate upon completion and up to 12 college credits. At this point, there’s no discussion of making such a course mandatory for test drivers, nor is there any talk of possibly adding an endorsement to the CDL for autonomous driving, but all that could change in time.

Taking the course at Pima Community College won’t guarantee applicants a job with TuSimple, but it won’t hurt. Pima isn’t currently closely involved with any of the other AV companies now operating in Arizona, but Blair says the door is open and she hopes there will be some uptake there.

“We’ve talked with a couple of other companies that are still figuring out how [this program] would work with their model and the training they are already doing,” Blair says. “Right now, it’s just TuSimple, but they’ve always been very open to us working with other autonomous vehicle companies. Of course, we're open to that also.”

Blair says they have 12 students currently enrolled in the program, with several close to graduating.

Waymo Via recently announced it would begin hiring test drivers, through Transdev, for its new Dallas division. The list of requirements and prerequisites is extensive.

Waymo's Rainwater did not go deeply into that company's expectations except to say, "Transdev has stringent hiring criteria that includes a clean driving record."

He did say that one of the challenges, at least in the early stages of the training process, is to get the test drivers to react instinctively and to know how far to let the Autonomy go before intervening. Those two imperatives, seemingly at odds, are fundamental to the test driver’s job.

“Developing those instincts isn't difficult, but the reaction to take over when someone else is driving isn't always automatic,” he says. “To get them started, we put them out in the cars first on our test track in a very controlled environment and we introduce faults to the driving scenario, such as having the car pull off to the right or something like that. They develop their instincts over time so that they can take over in a split second.”

TuSimple starts its new-hires out on the track as well, after a review of their work history, background checks, an extensive on-road evaluation and a personal interview.

“Our autonomous driver training program, which is itself extensive, weeds out drivers that can’t wrap their heads around it sufficiently for us,” Price says. “We'll take drivers out to the track and inject faults and see how they respond.”

Drivers need to be able to observe not only the vehicle, but what the Autonomy is doing to the vehicle – whether it's in line with what it's supposed to be doing, or if it's not behaving appropriately – and they have to recognize that immediately. Drivers who can't do that, or who tend to be inattentive, don’t make the cut.

“It usually takes a solid six months or more for a driver to reach a point where we trust them in the left seat operating the Autonomy,” Price says. “They have to go through a very extensive set of experiences before they can do that.”

So, for all those requirements and qualifications, how’s the pay? The ad Waymo placed recently through Transdev for drivers based in the Dallas area listed a starting pay of $29 per hour with “great benefits.” Neither Price nor Rainwater spoke specifically about pay and benefits for this story except to say it’s better than drivers usually see in over-the-road operations and the benefits are second to none.

With two extensively trained humans in the cab, both observing the environment and the Autonomy's responses and predictions, the chances of having an incident similar to the Uber one seem remote – but not impossible. Things can happen very quickly at 65 mph, even with four or five seconds of look-ahead time. The Autonomy has to make the right call, and two humans have to agree. And all that might have to happen in a split second.

Heavy Duty Trucking Equipment Editor Jim Park was honored with a 2021 Jesse H. Neal Award in the Best Technical/Scientific Content category for his coverage of autonomous truck technology. The entry included this article.

More Safety & Compliance

Nominations Open for HDT Truck Fleet Innovators 2026

Heavy Duty Trucking is searching for forward-looking leaders at trucking fleets as nominations for HDT’s Truck Fleet Innovators 2026. Deadline is May 15.

Read More →

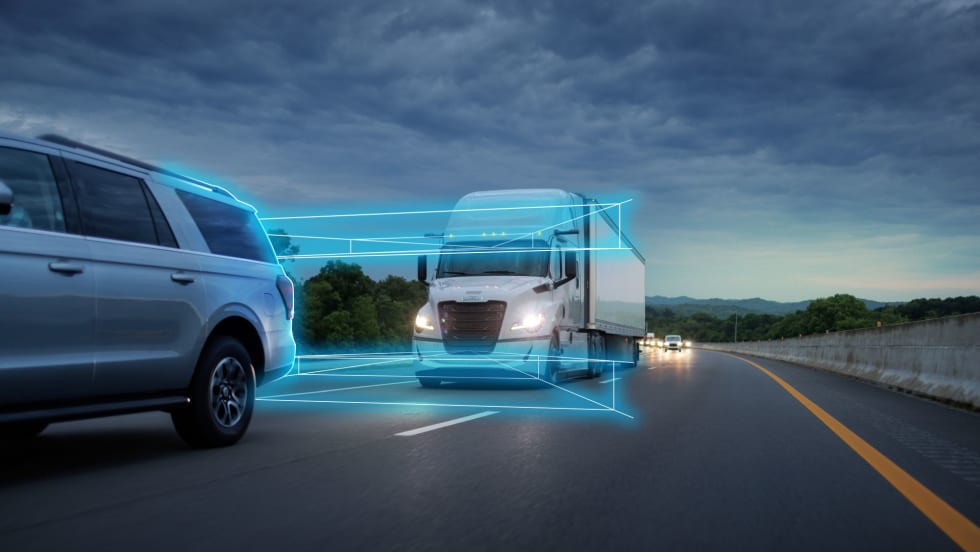

Freightliner Expands Detroit Assurance with New Intersection and Turning Safety Tech

Detroit’s next-generation ABA6 safety system adds cross-traffic detection and enhanced side guard assist with left-turn protection, targeting high-risk urban scenarios.

Read More →

'Beyond Compliance,' Regulations, Driver Coaching on ATRI’s 2026 Research List

The American Transportation Research Institute will examine driver coaching, regulatory impacts — including the "Beyond Compliance" concept —and weather disruptions that shape trucking operations.

Read More →

FMCSA Revamps DataQs to Improve Fairness, Speed of Reviews

New requirements add firm deadlines and independent review steps, addressing long-standing complaints about inconsistent rulings and slow response times.

Read More →

FMCSA Extends Paper Medical Card Exemption … Again

Five states still aren't ready to accept commercial driver medical exam information directly from the medical examiner's registry.

Read More →

HDT Honors the Best New Products of 2025 at TMC [Photos]

Heavy Duty Trucking's Top 20 Products awards recognize the best new products and technologies. Check out the award presentations at the 2026 Technology & Maintenance Council annual meeting.

Read More →

Detroit Engines: Trusted Performance, Built for What's Next

The Detroit® Gen 6 engine platform proves that real progress doesn’t require a complete redesign. Built on 20 years of trusted technology, these engines are designed for efficiency, stronger performance, and greater reliability than before. And they do it all while complying with 2027 EPA standards on every mile.

Read More →

Aperia Expands Halo Platform with Steer-Tire Inflation System, Fifth-Wheel Integration

Aperia Technologies introduced a new automatic tire inflation system for steer axles and a partnership with Fontaine Fifth Wheel to integrate coupling status into its Halo Connect platform.

Read More →

Fleetworthy and HAAS Alert Expand Partnership Stopped Truck Protection Alerts

Fleetworthy and HAAS Alert expanded their partnership to deliver real-time digital alerts that warn motorists when commercial trucks are stopped roadside and notify truck drivers when approaching emergency responders.

Read More →

New Entrants, Chameleon Carriers, and Safety: Is It Too Easy to Start a Trucking Company?

More than 100,000 new trucking companies enter the industry each year, but regulators manage to audit only a fraction of them. That churn creates opportunities for inexperienced startups — and for “chameleon carriers” that shut down after safety violations and reappear under new identities. Read more from Deborah Lockridge in this commentary.

Read More →