I take it back. Way back. In my May column I wrote about the Arizona fatality in March involving an Uber vehicle in autonomous mode. The facts were “a bit sketchy,” I admitted, but suggested that “in a sense they don’t matter. It’s about the optics.”

Commentary: The Fatal Uber Crash Revisited

Armed with more information, executive contributing editor Rolf Lockwood revisits the fatal crash of an autonomous Uber vehicle in Arizona to determine who or what was really at fault.

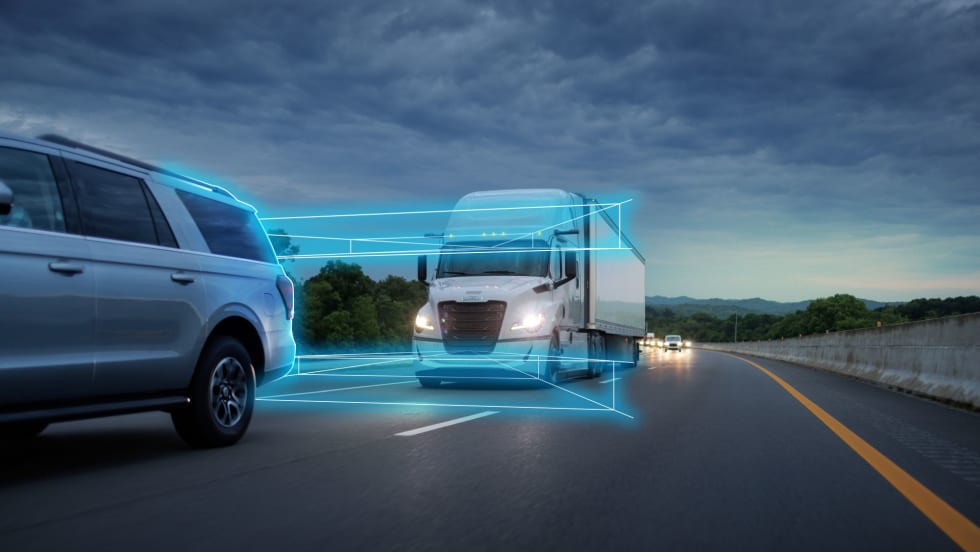

With more information, Rolf revisits the fatal crash of an autonomous Uber vehicle in Arizona. Police say the driver could have seen the victim and stopped the Volvo some 43 feet before hitting them if she’d been paying attention.

Photo via NTSB

Well, it certainly was about the optics in the larger context of social acceptance in the autonomous world. And they were undeniably bad, even though it was the first fatal accident involving what people call a ‘robot’ vehicle.

“I’d venture a guess that autonomy actually has little to do with this accident, that nothing could have prevented the woman’s death,” I wrote back in May. “There simply wasn’t time for any reaction, human or otherwise.”

But there was time after all, according to a 300-page report released by the Tempe Police Department in late June. In fact, the report blames the crash on distracted driving. Sound familiar?

To remind you of the circumstances: the Uber vehicle, a Volvo XC90 SUV, was doing 44 mph on a multi-lane roadway at night, apparently in Level Four autonomous mode, and simply failed to “see” Elaine Herzberg crossing the road while walking her bicycle. She was not at a crosswalk; she was jaywalking, in other words. A so-called “backup” human driver, Rafaela Vasquez, was present, though not actively driving. Worse, the police report says she was watching ‘The Voice’ on a cell phone, and in the 20 minutes or so before the crash, her eyes were off the road some 32% of the time.

As a video the police released on Twitter makes clear, the driver saw the woman crossing the road only half a second before impact. The car did not brake at all.

Tempe Police (@TempePolice) on Twitter, March 21, 2018: “Tempe Police Vehicular Crimes Unit is actively investigating the details of this incident that occurred on March 18th. We will provide updated information regarding the investigation once it is available.” pic.twitter.com/2dVP72TziQ

But police say that, had she been paying attention, she could have seen the victim 143 feet away and brought the Volvo to a stop some 43 feet short of Herzberg.

“This crash would not have occurred if Vasquez would have been monitoring the vehicle and roadway conditions and was not distracted,” the report stated.

Confusing the issue, the vehicle’s collision-avoidance capability had been partly disabled, according to a preliminary report by the National Transportation Safety Board. The self-driving system “saw” Herzberg about six seconds before impact but did not automatically apply the brakes as it otherwise would have. Nor did it warn the driver. Emergency braking was turned off, the NTSB report said, “to reduce the potential for erratic vehicle behavior.” The system depended on human intervention. And therein lies Uber’s big mistake, it would seem, alongside an apparent failure to screen its “backup” drivers effectively.

So I was pretty much correct back in May in writing that autonomy itself wasn’t to blame here, but rather its management. What I didn’t see was the egregious human error at play.

The most glaring lesson, as if we need to hear it again, is that even a couple of seconds of distraction can be deadly. This is the most dramatic example we might imagine, but in the end it came down to seconds. Like the time it takes to check text messages on your own cell phone.
