We can’t afford to be afraid of change, especially not these days when anything seems possible – but we also can’t afford to be cavalier about the path we take in finding new and better ways to do things.

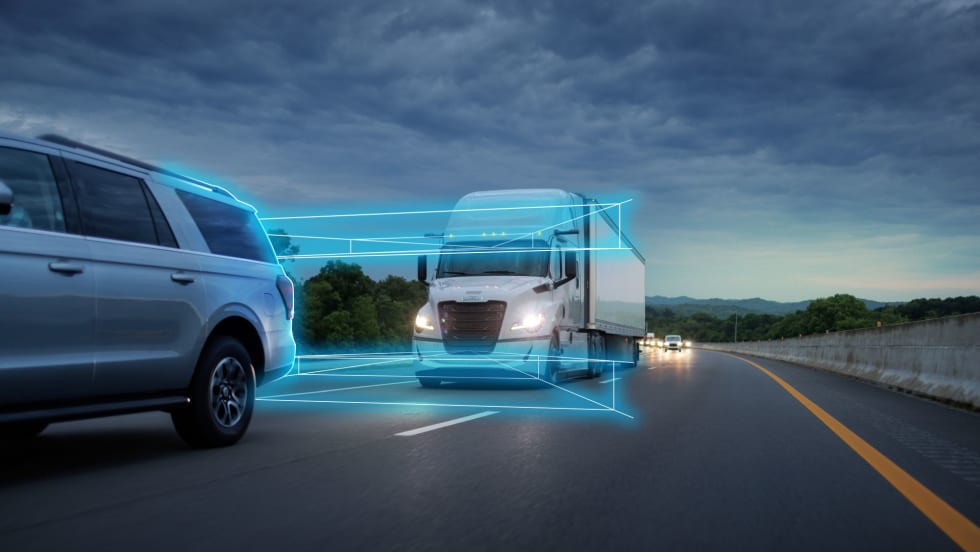

Commentary: Are We Moving Too Fast on Autonomous Vehicles?

Commentary by Executive Contributing Editor Rolf Lockwood.

Case in point: the testing and occasional use of autonomous and semi-autonomous vehicles on public roads. So far it’s been mostly cars, but heavy trucks are out there pretty often, too. And in a couple of recent cases, mayhem ensued.

First we had the Arizona fatality involving an Uber car in autonomous mode. The facts are a bit sketchy, but in a sense they don’t matter. It’s about the optics.

The vehicle was doing 40 mph, 5 mph under the speed limit, apparently in Level Four autonomous mode, and simply failed to “see” a woman crossing the roadway at night. She walked into the car’s path, allegedly jaywalking, according to published reports. A driver was present, though not actively driving. Confusing the issue somewhat, the vehicle’s own factory collision-avoidance system had apparently been turned off in favor of Uber’s technology.

I’d venture a guess that autonomy actually had little to do with this accident, and that nothing could have prevented the woman’s death. There simply wasn’t time for any reaction, human or otherwise.

More recently, a California man in a Tesla Model X running in Autopilot mode died when the car drove itself straight at one of those awful concrete lane dividers. The man’s hands had apparently been off the wheel for at least six seconds, despite warnings from the car. Did its systems fail? Or is this essentially a new variation on the theme of driver error? It’s not clear.

Regardless, whatever trust had been built up in the idea of vehicular automation has been severely damaged by these incidents. That was bound to happen at some point, but Uber was probably right to suspend its autonomous testing after the Arizona tragedy, even though I don’t think its autonomous technology failed. Optics again.

The public seems to have little confidence in the autonomous idea in cars, and a lot less when it comes to trucks. It will take time to restore the average person’s willingness to entertain the concept of vehicles driving themselves. Confidence in the idea? Think at least a decade or two.

There’s no surprise there, and this really isn’t a setback for proponents of automation, because it was never going to be a slam dunk. The technology is well advanced (though clearly imperfect), but the social and legal aspects of this were always going to be the bigger challenges by a very wide margin. In a sense, then, nothing has changed, despite these fatalities that have drawn so much public attention.

Not surprisingly, there are an increasing number of calls for more rigorous testing of autonomous technology on test tracks before such vehicles are let loose on public roads. California, on the other hand, is steadily making public testing easier.

So who’s right? Should we be more cautious than we’ve been so far? I tend to think so, not just because of the recent fatalities. I simply think we’re moving too fast.

I’m certainly not afraid of change, and not of the autonomous one in particular, but I really do think we’re being cavalier. It forces me to ask: What’s the rush?
