How do you know you can trust a robot? That’s the question tackled by IEEE in its new ethics certification program for autonomous systems.

Commentary: The Ethics of Autonomous Vehicles

When we’re talking about autonomous vehicles, the ethics questions are many, and developing a single ethical standard may be harder than it sounds.


IEEE stands for the Institute of Electrical and Electronics Engineers. It describes itself as a global technical professional organization “dedicated to advancing technology for humanity.”

When we’re talking about autonomous vehicles, the ethics questions are many. How do we program the car or truck to act if a crash into a crowd of people is imminent? Do we program it to protect the passengers or protect the crowd? What if there is only one bystander in danger, but the car holds four passengers? What about a mother dog and her litter of puppies in the road?

I recently heard an interview on public radio’s Marketplace with John Havens, executive director of the IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems, in which he referred to the “trolley problem,” a classic thought experiment in ethics. You see a runaway trolley moving toward five tied-up people lying on the tracks. You are standing next to a lever that controls a switch. If you pull the lever, the trolley will be redirected onto a side track, and the five people on the main track will be saved. However, there is a single person lying on the side track. You have two options:


1. Do nothing and allow the trolley to kill the five people on the main track.

2. Pull the lever, diverting the trolley onto the side track, where it will kill one person.

By doing nothing, you take no action that harms another person. By pulling the lever, you save five people but actively do something that results in a death. There are many variants, including one where the single person on the side track is a loved one of the person making the decision. As you might imagine, when the people involved are strangers, the vast majority of us would say that you should divert the trolley. When the person who would be killed is a loved one, however, the share who would pull the lever drops.

Developing a single ethical standard might be harder than it sounds, when you think about the varying ethics among the human beings who are programming or riding in these vehicles.

Let’s take how we treat animals as an example. On one end of the spectrum, there are people who believe under no circumstances is it ethical to consume or use an animal-based product, not even Worcestershire sauce containing trace amounts of anchovy paste. Some even believe it’s unethical to keep animals as pets. On the other end of the scale are big-game trophy hunters who believe it’s ethical to hunt endangered species, because the fees they pay to do so go to conservation efforts. And of course there are nearly as many positions in between as there are people.

Adding to the confusion, ethics vary by culture and geography. For us in the West, many of our ethical beliefs derive from the ancient Greek philosophers. But in other parts of the world, Havens noted, people draw on other traditions, such as Confucian ethics or Ubuntu ethics.

In his book, “Heartificial Intelligence,” Havens explores the question: “How will machines know what we value, if we don’t know ourselves?”

The ethics question is a key part of the public acceptance of automated vehicles. Transportation Secretary Elaine Chao said in a keynote address last summer at the Automated Vehicles Symposium, “Without public acceptance, automated technology will never reach its full potential.”

When developing ethical standards for autonomous technologies, “the logic is to write out all the potential scenarios that could happen,” IEEE’s Havens told Marketplace. “And the logic is you never want to have to make those types of choices. But right now, a lot of times those conversations happen outside the context of how many humans unfortunately are killed with cars as they are right now.”

This is the logic behind the drive toward more automated vehicle technologies: Flawed they may be, but they’re still going to make fewer mistakes than human drivers, who may be tired, distracted, or just not very good drivers.

And that is the logical conclusion. But humans aren’t always logical.

More Safety & Compliance

Avoiding Winter Pileups: Don’t Become the Next Link in the Crash-Chain

Winter roadway “pileups” aren’t one crash — they’re a chain reaction. Here’s what triggers them, how truck drivers can spot the danger early, and what to do if you're suddenly trapped in the mess.

Read More →

FMCSA’s Motus System Is Coming. What Fleets Need to Know Now

The long-awaited registration system promises a single portal — and tighter fraud controls.

Read More →

Nominations Open for HDT Truck Fleet Innovators 2026

Heavy Duty Trucking is searching for forward-looking leaders at trucking fleets as nominations for HDT’s Truck Fleet Innovators 2026. Deadline is May 15.

Read More →

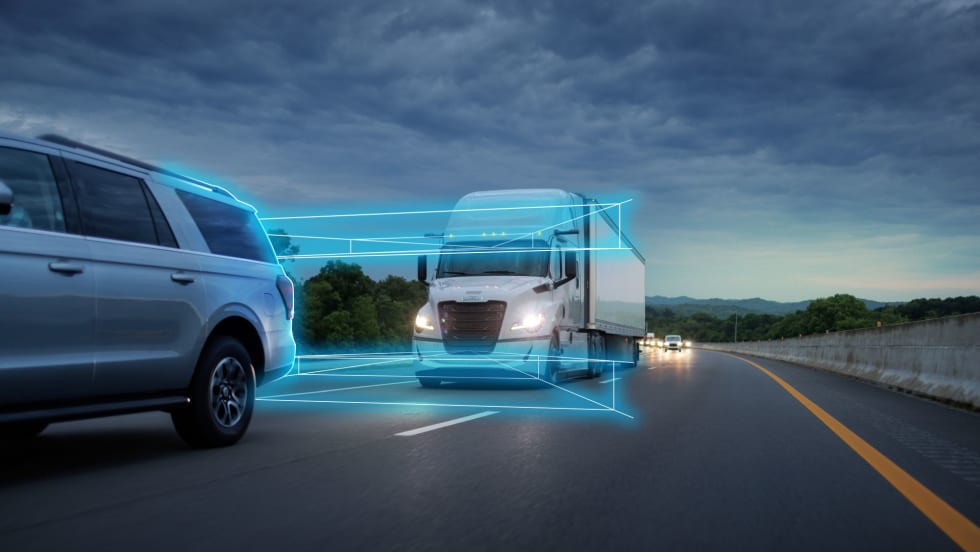

Freightliner Expands Detroit Assurance with New Intersection and Turning Safety Tech

Detroit’s next-generation ABA6 safety system adds cross-traffic detection and enhanced side guard assist with left-turn protection, targeting high-risk urban scenarios.

Read More →

'Beyond Compliance,' Regulations, Driver Coaching on ATRI’s 2026 Research List

The American Transportation Research Institute will examine driver coaching, regulatory impacts — including the "Beyond Compliance" concept —and weather disruptions that shape trucking operations.

Read More →

FMCSA Revamps DataQs to Improve Fairness, Speed of Reviews

New requirements add firm deadlines and independent review steps, addressing long-standing complaints about inconsistent rulings and slow response times.

Read More →

FMCSA Extends Paper Medical Card Exemption … Again

Five states still aren't ready to accept commercial driver medical exam information directly from the medical examiner's registry.

Read More →

HDT Honors the Best New Products of 2025 at TMC [Photos]

Heavy Duty Trucking's Top 20 Products awards recognize the best new products and technologies. Check out the award presentations at the 2026 Technology & Maintenance Council annual meeting.

Read More →

Detroit Engines: Trusted Performance, Built for What's Next

The Detroit® Gen 6 engine platform proves that real progress doesn’t require a complete redesign. Built on 20 years of trusted technology, these engines are designed for efficiency, stronger performance, and greater reliability than before. And they do it all while complying with 2027 EPA standards on every mile.

Read More →

Aperia Expands Halo Platform with Steer-Tire Inflation System, Fifth-Wheel Integration

Aperia Technologies introduced a new automatic tire inflation system for steer axles and a partnership with Fontaine Fifth Wheel to integrate coupling status into its Halo Connect platform.

Read More →