Building an Autonomous Truck Driver

Autonomous trucks learn and think and adapt to situations just like human drivers, but they do it faster, and they never tire or become distracted. At least that’s the promise. Learn more about the technology behind autonomous trucks.

Daimler Trucks North America, which is working with autonomous-tech companies such as Torc Robotics and Waymo, recently showed off its second-generation autonomous Freightliner Cascadia. Note the sensor clusters on the front bumper and above the windshield and doors.

Photo: Jack Roberts

Autonomous trucks behave in a surprisingly similar manner to human drivers. They observe, they measure, they attempt to predict the behavior of vehicles around them, and they are constantly planning alternative strategies, all while maintaining their present course.

The better human drivers do that, too. However, lesser drivers, or those distracted or fatigued, might miss one of those steps. The result can be a crash. That’s why supporters say robotic trucks, because they never tire or get distracted, can reduce crash rates significantly.

To drive as well as humans, robotic trucks must be able to see as well as humans and make the right decisions all the time. How does that work?

Situational awareness begins with the truck’s ability to assess its surroundings.

Robotic truck companies have developed perception systems that enable the truck to “see” its surroundings. Baked-in high-resolution maps, GPS location data and inertial sensors tell the truck where it is and how fast it’s going. That awareness of itself is complemented by perception technology that informs the artificial-intelligence-based “driver” about what’s going on around it: where other vehicles are, how fast they are traveling relative to the truck, what physical obstacles exist, and what possible threats may be present.

Waymo’s autonomous truck project uses cameras and radar as well as a lidar system of its own design.

Photo: Jim Park

“We have various types of sensors that can see the world in different ways, and they complement each other in a variety of ways to get a sense of what’s around us,” says Andrew Stein, a staff software engineer at Waymo and one of the lead engineers on the company’s autonomous trucking project. “We also have the ability to decide what all those things we’re sensing are going to do next. Our next job is to figure out — based on where I am, what I’m seeing, and what everybody’s about to do — is what should I do?”

First, the trucks need to “see” the world around them. The keys here are diversity and redundancy, plus enough overlap in the data collected that the AI driver can compare one sensor’s output with another’s to verify that what the truck is seeing is real and correct.

Three sets of eyes

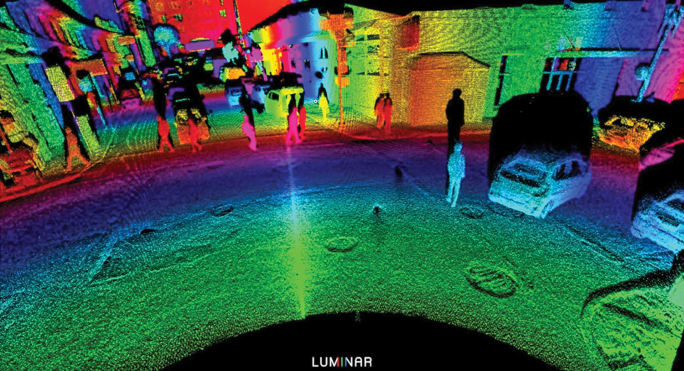

To do this, autonomous trucks use cameras, radar, and lidar. Together they provide a “spatial” representation of the truck’s surroundings and detect objects near and far from it. This fusion of three types of sensor input enables the truck to determine speed and distance of objects and vehicles, down to a centimeter in some cases. Trained machine learning algorithms then help the truck to determine what it is seeing, such as other cars, trucks, pedestrians, animals, guard rails, signs, etc.
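The cross-checking idea can be illustrated with a toy example. This is only a sketch under stated assumptions, not how any of the companies quoted here fuse their data: the function name `cross_check_range`, the sensor labels, the 1-meter tolerance, and the sample readings are all hypothetical. It takes one distance estimate per sensor, uses the median as the consensus, and flags any sensor that disagrees with it.

```python
from statistics import median


def cross_check_range(readings_m: dict[str, float], tolerance_m: float = 1.0):
    """Return (consensus_range_m, outlier_sensor_names).

    readings_m maps a sensor name to its estimated distance to the same
    object, in meters. The tolerance is a hypothetical agreement threshold.
    """
    if not readings_m:
        raise ValueError("need at least one reading")
    # The median is not dragged around by a single bad reading.
    consensus = median(readings_m.values())
    # Any sensor farther than the tolerance from the consensus is suspect.
    outliers = sorted(
        name for name, r in readings_m.items() if abs(r - consensus) > tolerance_m
    )
    return consensus, outliers


if __name__ == "__main__":
    # Hypothetical estimates of the distance to one vehicle ahead.
    readings = {"camera": 48.0, "radar": 52.1, "lidar": 52.3}
    consensus, outliers = cross_check_range(readings)
    print(consensus, outliers)  # 52.1 ['camera']
```

In this made-up case the radar and lidar agree and the camera estimate is flagged, which matches the point that cameras are weaker at measuring distance.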

A visual representation of what lidar sees. Lidar can detect large and small, moving or stationary objects. The bands of color represent distance from the device.

Photo: Luminar Technologies

“We use three different categories of sensors to support the perception systems,” says Ben Hastings, chief technology officer at Torc Robotics. “Cameras see the world similarly to how people perceive the world. They can see visible light and different colors and things like traffic signs and lane lines. And again, similar information to how you see the roads when you’re driving down the highway.”

The ability to recognize color is important for determining if a traffic light is red, yellow or green, for example.

Cameras are placed all around the truck, often with overlapping fields of view. Some are wide-angle to get the big picture. Others are more narrowly focused for longer-range visuals. Super-high-resolution cameras make object detection and differentiation possible at a range of distances, but they cannot provide accurate distance information. They have other limitations, too: performance degrades in low light or darkness, in fog, rain, or snow, or when the lens is otherwise obscured. If the camera is blocked, it can’t produce an image.

“We also use radars and lidars,” says Hastings. “These are active sensors, meaning they don’t rely just on ambient light. They emit their own energy. Radar and lidar are similar in principle, but they produce very different returns.”

Lidar, which is a sort-of acronym for “light detection and ranging,” uses one or multiple laser beams to sweep across the environment. These laser beams reflect off other vehicles, pavement, surrounding buildings, people, etc., and a distance measurement can be obtained from the time that elapses between firing the laser and receiving the reflection.
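The time-of-flight arithmetic is simple enough to show directly. The sketch below assumes an idealized single pulse and a hypothetical round-trip time; the function name `lidar_range_m` is illustrative and not taken from any vendor's software. Because the pulse travels to the object and back, the distance is half the total path.

```python
# Speed of light in vacuum, meters per second (exact by SI definition).
SPEED_OF_LIGHT_M_S = 299_792_458.0


def lidar_range_m(round_trip_s: float) -> float:
    """Distance to the reflecting surface from a pulse's round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time cannot be negative")
    # The pulse travels out and back, so halve the total path length.
    return SPEED_OF_LIGHT_M_S * round_trip_s / 2.0


if __name__ == "__main__":
    # Hypothetical return arriving 1 microsecond after the pulse was fired.
    print(f"{lidar_range_m(1e-6):.1f} m")  # 149.9 m
```

A reflection that arrives one microsecond after firing puts the object about 150 meters away, which hints at how precise the timing must be to resolve centimeters.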

The reflected beams produce a high-definition, three-dimensional “point cloud” from which the AI can identify objects and, most importantly, determine their distance and speed. These point clouds are often rendered as images in shades of red, green, yellow and blue that represent varying degrees of reflectivity, but the lidar itself does not see the world in color.

“Lidar is very high resolution, which produces a very accurate representation of the shapes surrounding the truck,” Hastings says.

But like cameras, lidar uses light, in the form of a laser beam. It’s outside the visible spectrum, but it’s still light. It is therefore limited by line of sight and, to some extent, by inclement weather or hazy conditions in which particles suspended in the air, such as fog or smoke, scatter the laser beams.

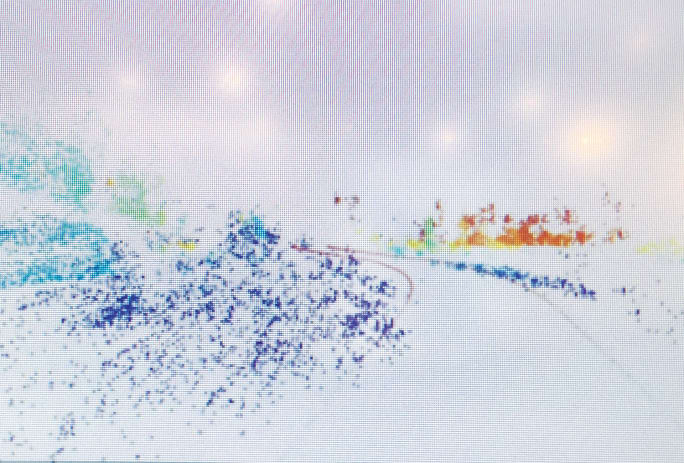

This image represents a radar point cloud, showing return from a radar device. Each point is a metallic surface. Radar can very accurately measure distance and directionality, but it doesn't produce a visually recognizable image. Radar can tell you in a single measurement how fast an object is moving relative to the sending unit.

Photo: Jim Park

Radar, on the other hand, is not affected by visual barriers. The third leg of the autonomous diversity and redundancy stool, radar produces an electromagnetic signal which, like lidar, is emitted and reflected back to the sensor. It can very accurately measure distance and directionality, but it doesn’t produce a visually recognizable image.

“Since we’re not bats, we’re not used to thinking about this kind of reasoning,” says Waymo’s Stein. “But radar is good at measuring distance as well as velocity. Two of the really key things about radar are its ability to measure speed or velocity directly. You don’t have to watch a thing over time and figure out how it’s moving. Radar can tell you in one measurement how fast the thing is going.”
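Stein does not say how radar gets speed from a single measurement. A common mechanism is the Doppler effect: a reflection from a moving object comes back at a slightly shifted frequency, and the shift is proportional to the relative speed. The sketch below assumes a 77 GHz carrier (a band widely used by automotive radar) and a hypothetical measured shift; it is not a description of Waymo's hardware.

```python
SPEED_OF_LIGHT_M_S = 299_792_458.0
# Assumption: many automotive radars operate near 77 GHz.
CARRIER_HZ = 77e9


def relative_speed_m_s(doppler_shift_hz: float, carrier_hz: float = CARRIER_HZ) -> float:
    """Relative (closing) speed implied by a measured Doppler shift.

    Positive shift means the object is approaching; negative means receding.
    The factor of 2 accounts for the signal's out-and-back trip.
    """
    return doppler_shift_hz * SPEED_OF_LIGHT_M_S / (2.0 * carrier_hz)


if __name__ == "__main__":
    # Hypothetical 5 kHz shift measured on one return.
    v = relative_speed_m_s(5_000.0)
    print(f"{v:.2f} m/s ({v * 3.6:.0f} km/h)")
```

With these assumed numbers, a 5 kHz shift corresponds to a closing speed of roughly 9.7 meters per second, or about 35 km/h, obtained without tracking the object across multiple frames.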

The thing to remember is the complementary nature of these three sensors, he adds. “They’re good at different things and they overlap in some ways so there’s a form of redundancy, but also an ability to really cover the various challenges that we have in capturing everything we can.”

Artificial intelligence

The truck wouldn’t have a clue what to do with all this input without a set of instructions and something to guide it on its journey. Companies such as Waymo use terms such as “the Waymo Driver” to describe the artificial intelligence that is making the mile-by-mile, second-by-second driving decisions. We’ll call it the AI driver.

The robotic truck companies have been mapping various routes for several years, producing highly detailed maps in visual, infrared (lidar) and electromagnetic (radar) formats for the AI driver to use as a reference.

In addition to the high-resolution maps, the AI driver has been loaded with thousands of actual images: cars and trucks, people and animals, signs and roadway infrastructure, and just about anything one can imagine seeing along a highway. Like human drivers, the AI driver learns what these things are through the imagery and other inputs, so when it “sees” one of them along a road it will have an idea what to expect from it. The AI driver will know that stop signs aren’t likely to dart out into the street, whereas small humans (children) are likely to behave less predictably.

In other words, the AI driver learns, like human drivers do. One of the advantages AI has over humans is that it can learn faster. Engineers can simulate situations that can run in fractions of a second in AI time but might take several minutes in real time. Through repeated simulations and analysis of the outcome, the engineers teach the AI how to “drive.” This includes everything from discerning how much torque to apply to the steering shaft to get the truck to change lanes, to preparing to stop if it sees a ball bounce out onto a road or the door on a parked car open.

Predicting the future

“We try to detect what’s out there, where it is around us, and what we think it is, but that only gives you a snapshot of either what’s just happened or what is happening at a moment,” says Waymo’s Stein. “What you want to know is what’s about to happen. As a driver, you’re always trying to predict what’s going to happen, even if you’re not explicitly doing so in your head. You are looking and thinking ahead. That’s what AI does, too.”

And it’s not just observation. Reasoning is part of the equation, too.

“Part of the advances of AI machine learning over the last couple of decades is being able to learn, not only what’s present in an image, or perceptually, but behavior of the world and to predict what might happen next,” Stein says. “The system is constantly predicting, and not just one thing. It doesn’t think, ‘Okay, here’s what’s going to happen,’ because it doesn’t know. It’s asking, ‘What are all the things that could happen,’ and then it starts its reasoning about a variety of possible scenarios and how likely it thinks they all are.”
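The idea of weighing several possible futures at once can be sketched in a few lines. Everything here is hypothetical: the scenario names, the raw scores, and the helper functions `normalize` and `rank` are illustrative stand-ins for what a trained prediction model would produce, not Waymo's method. The sketch turns raw scores into probabilities and lists the scenarios from most to least likely.

```python
def normalize(scores: dict[str, float]) -> dict[str, float]:
    """Scale non-negative scores so they sum to 1 and can be read as probabilities."""
    total = sum(scores.values())
    if total <= 0:
        raise ValueError("scores must sum to a positive number")
    return {name: s / total for name, s in scores.items()}


def rank(probabilities: dict[str, float]) -> list[tuple[str, float]]:
    """Return (scenario, probability) pairs, most likely first."""
    return sorted(probabilities.items(), key=lambda item: item[1], reverse=True)


if __name__ == "__main__":
    # Hypothetical scores for what the car in the next lane might do.
    scores = {"keeps lane": 70.0, "merges into our lane": 25.0, "brakes hard": 5.0}
    for scenario, p in rank(normalize(scores)):
        print(f"{scenario}: {p:.0%}")
```

The point of keeping the whole list, rather than only the top entry, is that a planner can still prepare for an unlikely but dangerous outcome.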

Robotic truck company TuSimple displayed this truck at the CES electronics show in Las Vegas in 2019. Cameras are located above the cab and on the side of the sleeper. The lidar devices are on the hood and the radar device is on the bumper. Together, the array provides a complete view of the area around the tractor with object-detection and tracking capabilities.

Photo: Jim Park

A rubber ball alone poses no threat to a truck, but the reasoning that a child could dart out unseen from behind a parked car in pursuit of the ball might cause the truck to ease up on the throttle in preparation for a sudden stop.

Hazard recognition

One of the reasons autonomous heavy trucks in freeway operations are likely to be commercialized at scale before autonomous passenger cars is the “relatively predictable” environment in which they operate. Compared to a city street, limited-access freeways are quite tame, especially in rural settings. Not much happens beside or behind the truck, usually.

But consider what Daimler and Torc call the “lost cargo scenario.” An object suddenly appears in the middle of the road, something that may have fallen off a truck, or a chunk of peeled-off tire tread, or an animal struck by a previous vehicle. At night, such an object would be more difficult to detect. The lidar may or may not catch it. It would depend on the level of reflectivity of the object. Unless it was metallic, the radar might not detect it either, and cameras would be of limited use in the dark. It might be easier to see in daylight, but if the AI truck is following another truck, it would have limited forward visibility even with lidar.

“We call this a lost-cargo scenario in our development phase, and there is no easy answer to this, because the number of permutations that can result from that is huge,” says Suman Narayanan, director of engineering in the Autonomous Technology Group at Daimler Trucks North America. “This scenario — and of course there are lots of variables — is one we are training the AI on. The best way we can do that is get out there, put more test trucks with safety drivers, and learn from how much we train the system.”

For every imaginable situation, there might be several correct responses leading to a successful outcome, or perhaps very few, or even none. Narayanan notes that human drivers, with all their experience, take action based on the circumstances.

“It might be safer to stay on course than to swerve or change lanes,” he says. “The alternatives could pose more danger than staying the course. In most cases, slowing down gives us time to assess the situation, and like humans, the computer also benefits from time.”

Locomation is taking a slightly different approach to automation: human-guided automation. The company believes it can bring automation to the market sooner if it’s less reliant on technology that requires AI and machine learning and a vast array of sensors. A human driver operates the lead truck, while a lighter degree of automation keeps the rear truck in a safe following position.

Photo: Locomation

In such a scenario, the AI driver doesn’t cycle through a list of pre-programmed options, such as “refrigerator in the middle of the road: plan A, change lanes to the right, plan B, change lanes to the left.” It is trained to think like a human, weighing the options against the present situation (traffic in the left lane, can’t go that way) and, ideally, choosing the safest one.
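A toy version of that selection step might look like the following. It is a sketch only: the `Maneuver` class, the risk scores, and the `choose_maneuver` function are hypothetical, and in a real system the feasibility and risk values would come from the perception and prediction stages at that instant rather than being typed in by hand.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Maneuver:
    name: str
    feasible: bool  # can it be done given the traffic right now?
    risk: float     # hypothetical score, 0 (safe) to 1 (dangerous)


def choose_maneuver(options: list[Maneuver]) -> Maneuver:
    """Pick the lowest-risk maneuver among those currently feasible."""
    candidates = [m for m in options if m.feasible]
    if not candidates:
        raise ValueError("no feasible maneuver")
    return min(candidates, key=lambda m: m.risk)


if __name__ == "__main__":
    # Hypothetical snapshot: an object in the lane, traffic on the left.
    options = [
        Maneuver("change lanes left", feasible=False, risk=0.2),
        Maneuver("change lanes right", feasible=True, risk=0.5),
        Maneuver("slow down in lane", feasible=True, risk=0.3),
    ]
    print(choose_maneuver(options).name)  # slow down in lane
```

The left-lane change has the lowest score but is ruled out by traffic, so the sketch settles on slowing down in the lane, consistent with Narayanan's remark that slowing down often buys time.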

While it might take a human driver a second or two to even realize what’s happening and respond, usually by hitting the brakes, the AI driver can recognize the hazard within hundredths of a second and take action, possibly applying the brakes. The difference in that time interval can mean the difference between a catastrophe and a bruise.
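A quick calculation shows what that gap means in distance. The numbers below are assumptions chosen to fit the ranges mentioned above: a highway speed of 65 mph, a human reaction time of 1.5 seconds, and an AI reaction time of 0.05 seconds. The function name `reaction_distance_m` is illustrative.

```python
MPH_TO_M_S = 0.44704  # exact conversion factor from miles per hour


def reaction_distance_m(speed_mph: float, reaction_s: float) -> float:
    """Distance covered at constant speed before any braking begins."""
    return speed_mph * MPH_TO_M_S * reaction_s


if __name__ == "__main__":
    speed_mph = 65.0  # assumed highway speed
    human_s = 1.5     # assumed, within "a second or two"
    ai_s = 0.05       # assumed, within "hundredths of a second"
    for label, t in (("human", human_s), ("AI driver", ai_s)):
        d = reaction_distance_m(speed_mph, t)
        print(f"{label}: {d:.1f} m traveled before reacting")
```

Under these assumptions the truck covers roughly 44 meters before a human driver reacts, versus about 1.5 meters for the AI driver.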

This article originally appeared in the March print edition of Heavy Duty Trucking.
